Why OpenClaw Feels Different

On the AI adoption curve, are personal assistants different this time?

Hello Everyone,

A little introduction -

OpenClaw is a free and open-source autonomous artificial intelligence agent that can execute tasks via large language models (LLMs), using messaging platforms as its main user interface. It was first released as Clawdbot on November 24, 2025, by Austrian developer Peter Steinberger. On February 14, 2026, he announced he was joining OpenAI. Between those two dates, OpenClaw went semi-mainstream and also went a bit viral in China.

OpenClaw is one of those projects that went from a lot of stars on GitHub to a viral story of what AI agents can do for normal people. People have compared OpenClaw in 2026 to what DeepSeek was in 2025.

Since its late 2025 launch, OpenClaw has become one of the fastest-growing open-source projects in history.

I’ve read many articles about OpenClaw, but I wanted to introduce someone who knows a lot about it: Vinoth Govindarajan, who writes The Agent Stack. Vinoth is a systems engineer at OpenAI, an open-source contributor, and co-author of Engineering Lakehouses with Open Table Formats, and he is adept at explaining how modern AI agents and data systems actually work in production.

The Agent Stack

If you read this article and like how it’s explained, please read the following too. You can also read the OpenClaw Unboxed publications. This is not a sponsored post, but some of my readers might be curious about OpenClaw, and this is an easy and great way to learn more.

Most of these articles were written in March 2026:

If you want to understand OpenClaw Architecture here is the Series 🦞

Homework after this post:

OpenClaw Architecture - Part 1: Control Plane, Sessions, and the Event Loop

The Agent Stack - Part 2: Foundation Infrastructure, Models, and Inference

OpenClaw Architecture - Part 3: Memory and State Ownership

OpenClaw Architecture - Part 4: Security Boundaries, Tool Risk, and Authorization

OpenClaw Architecture - Part 5: Tools, Plugins, and Capability Boundaries

OpenClaw Architecture - Part 6: Reliability, Observability, and Evaluation

A week ago Peter shared his journey in a TED Talk that you can watch here; the talk is about 17 minutes long.

How I Created OpenClaw, the Breakthrough AI Agent | Peter Steinberger | TED

Finally, if you are looking for an easy-to-read intro to a Systems Map of Modern Agent Infrastructure, read this.

Vinoth Govindarajan writes about AI systems, infrastructure, and the changing interface between software and everyday work.

There are a lot of great writers on this platform like Vinoth Govindarajan who write about AI agents. There are so many great OpenClaw guides, and the ecosystem is improving quickly. I asked Vinoth for his take on OpenClaw architecture assuming most of my readers are non-technical.

Vinoth Govindarajan is a Member of Technical Staff at OpenAI, where he works on core data infrastructure for large-scale AI systems and agent-facing platforms. Before OpenAI, he was a Staff Software Engineer at Apple and Uber, where he built next-generation data platforms, incremental ETL systems, and real-time pipelines using technologies including Iceberg, Spark, Trino, Flink, and Hudi.

If you’re skeptical about OpenClaw, you might enjoy this (March 2026). I hope you enjoy the topic and the incredible guest contributor’s post. Let’s try to picture what AI will soon be able to do for us. ❯❯❯❯

Why OpenClaw Feels Different

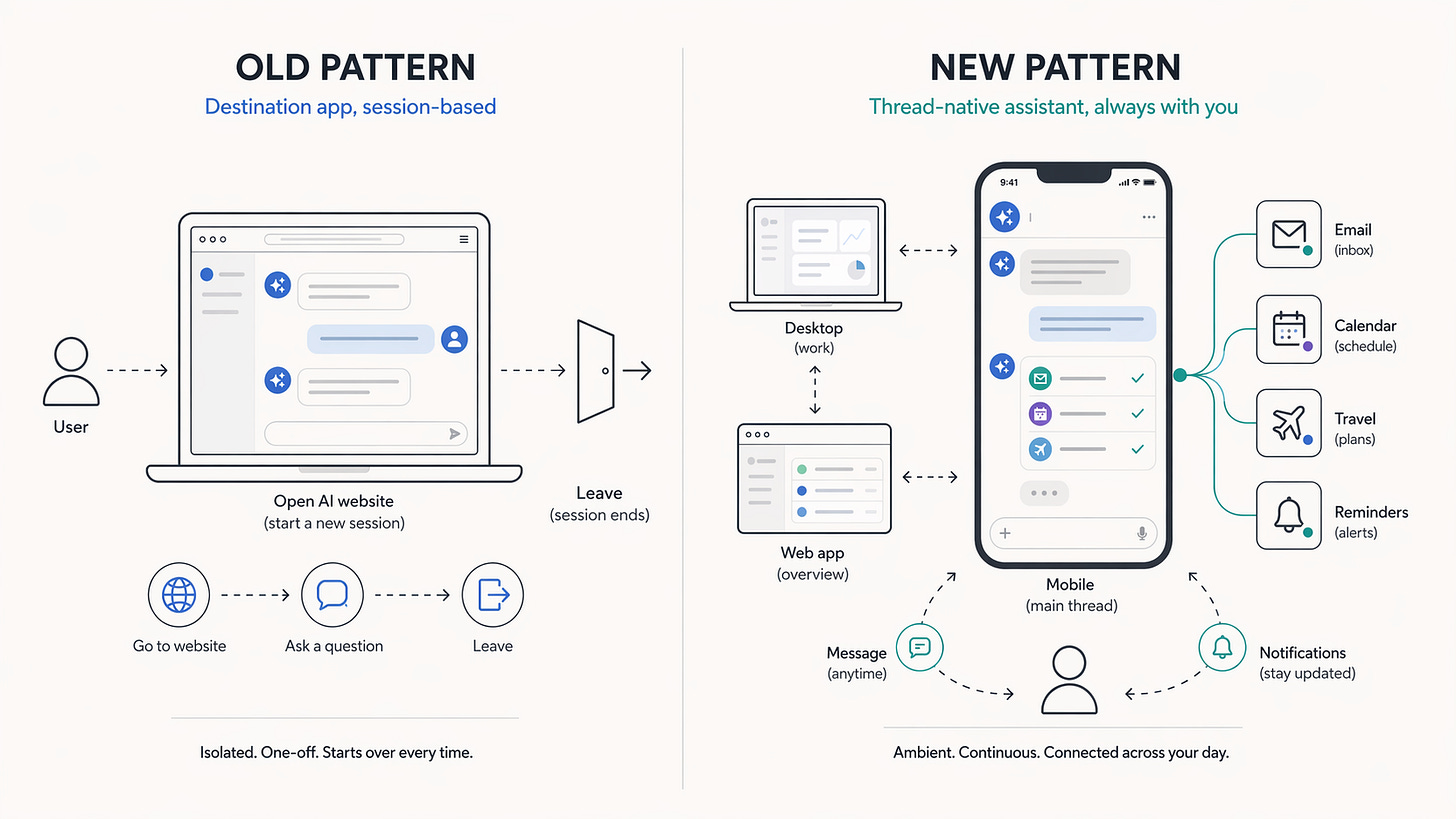

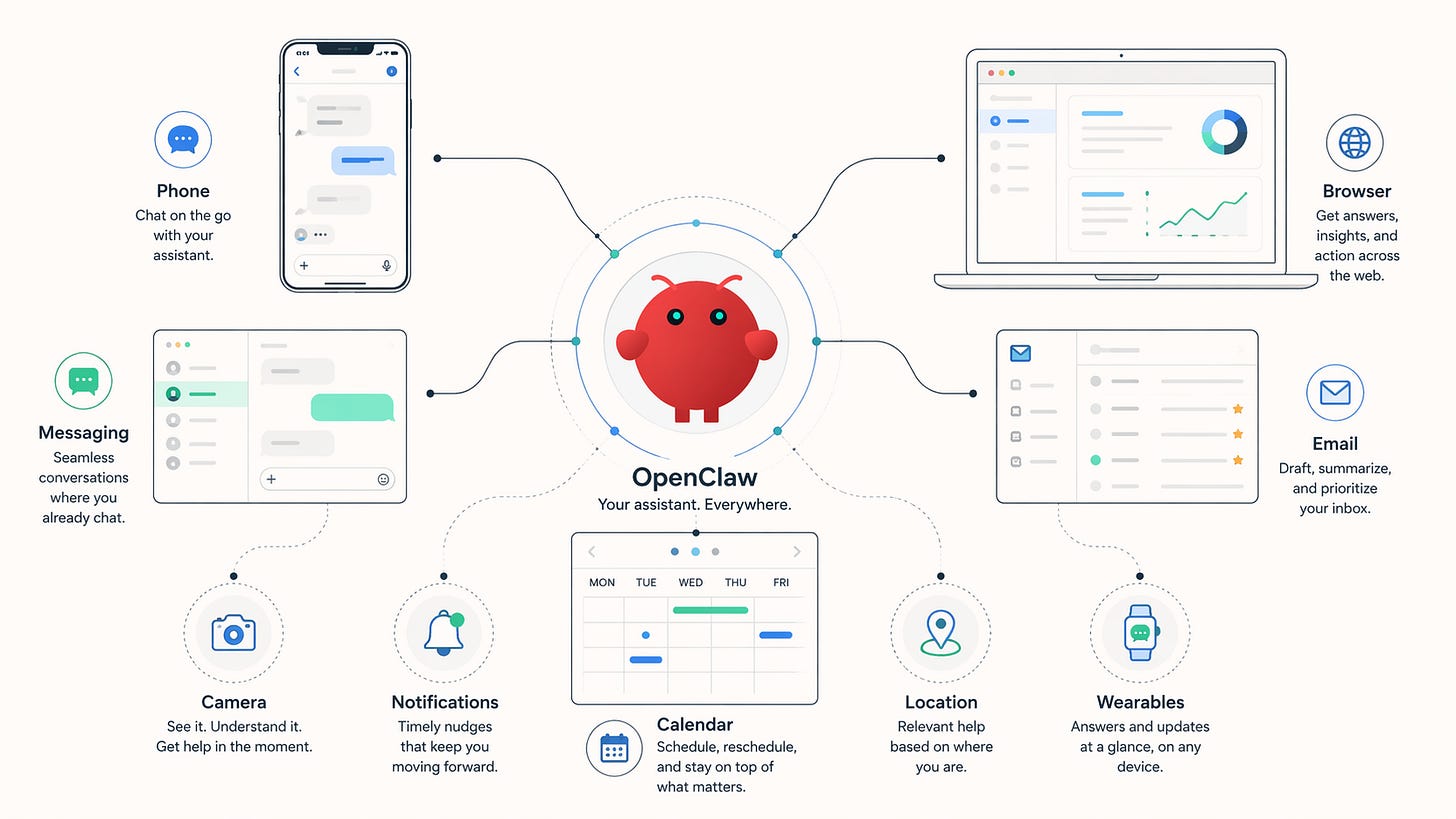

It is not just another place to chat with AI. It is an early glimpse of AI moving into the threads, devices, and routines people already use.

You are standing in an airport line. Your inbox is backing up. A meeting needs to move. The old workflow is familiar: open the laptop, find the right tabs, start triage. OpenClaw points to a different one. Send a message in WhatsApp or Telegram, ask the assistant to handle the first pass, and keep walking. That is not a metaphor the project’s fans invented after the fact. OpenClaw’s own site pitches exactly this shape of product: an assistant that can help with email, calendar, flights, and everyday life admin from the chat apps you already use.

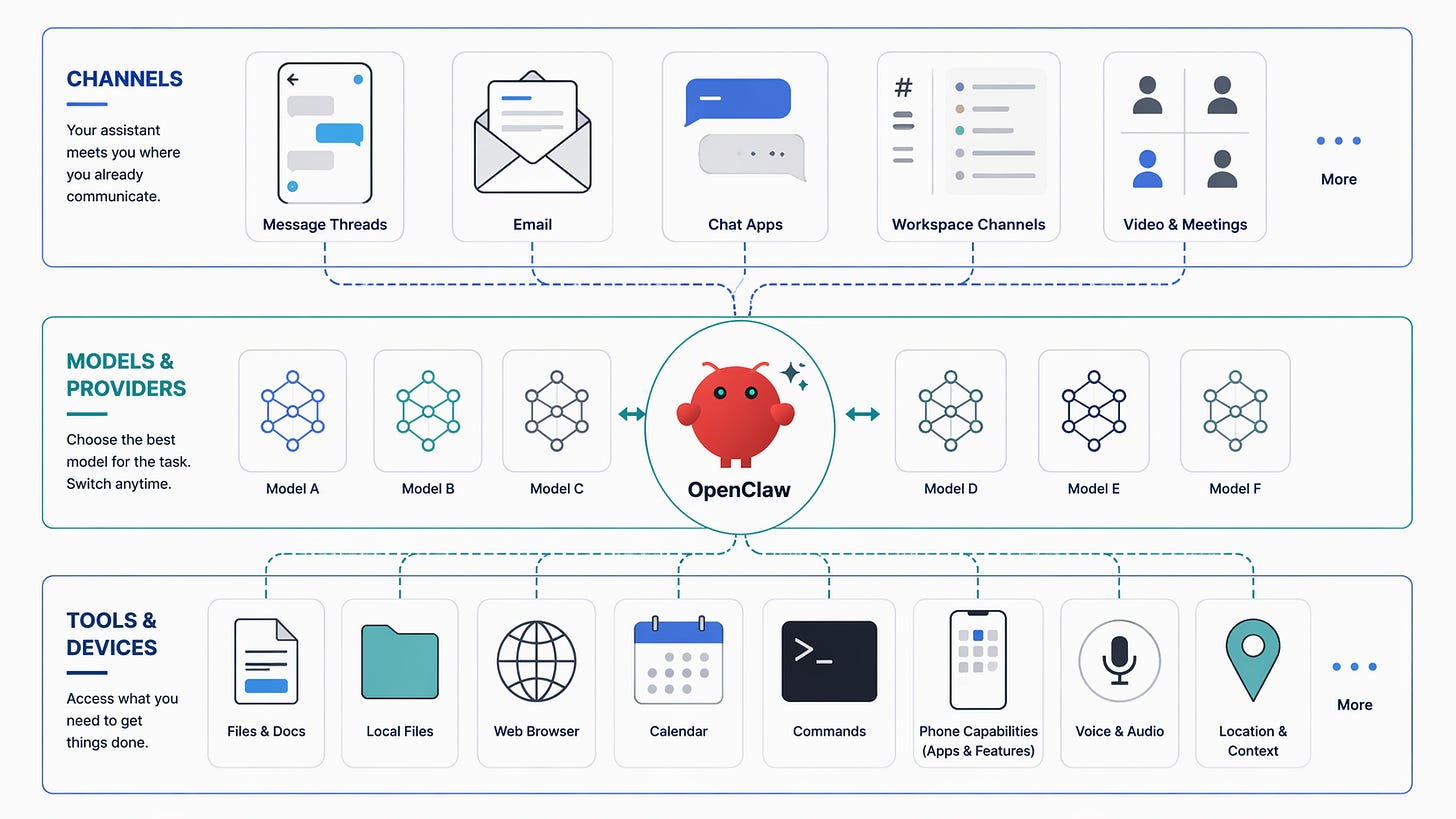

My read is that OpenClaw matters less as a story about smarter answers and more as a story about interface. It moves AI out of a destination app and into a message thread, gives it continuity across surfaces, and makes it feel reachable from the same places people already check all day. OpenClaw’s docs describe a self-hosted gateway that acts as an always-on control plane, while the assistant shows up across messaging surfaces like WhatsApp, Telegram, Slack, Signal, iMessage, Discord, and WebChat.

What changes once AI leaves the chat box: ✨

the message thread becomes the front door

context can survive across surfaces

the assistant can check in first

the phone becomes part of the interface

the whole thing is less trapped inside one company’s product boundary

The thread becomes the front door

Most people still experience AI as a place they go to. They open a site, start a conversation, get an answer, and leave. OpenClaw is organized around a different assumption. Its docs say the assistant can live across a long list of chat surfaces, and the personal-assistant guide is built around a dedicated WhatsApp setup that behaves like an always-on assistant. That matters because the message thread stops being a side channel and starts becoming the main entry point.

The session model strengthens that feeling. OpenClaw routes conversations into sessions based on where they came from, and by default all direct messages share one main session for continuity. Identity links can map the same person across channels so a conversation on one surface can feel like a continuation of the last exchange somewhere else. That is a very different experience from opening a destination app and starting fresh.
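The routing idea above can be sketched in a few lines of Python. This is a minimal illustration, not OpenClaw’s actual code: `IDENTITY_LINKS`, `Message`, `resolve_person`, and `session_key` are hypothetical names, and the rule that group chats get one session per origin is an assumption added only to contrast with the shared direct-message session.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical identity links: (channel, sender id) -> one canonical person.
IDENTITY_LINKS = {
    ("whatsapp", "+15550100"): "alice",
    ("telegram", "alice_tg"): "alice",
}


@dataclass(frozen=True)
class Message:
    channel: str
    sender: str
    is_direct: bool
    group_id: Optional[str] = None


def resolve_person(msg: Message) -> str:
    """Map a channel-specific sender to a canonical identity, if one is linked."""
    return IDENTITY_LINKS.get((msg.channel, msg.sender), f"{msg.channel}:{msg.sender}")


def session_key(msg: Message) -> str:
    """Direct messages share one main session; groups are keyed by origin (assumption)."""
    if msg.is_direct:
        return "main"
    return f"{msg.channel}:group:{msg.group_id}"
```

With this shape, a WhatsApp message and a Telegram message from the same linked person both land in `main` and resolve to the same identity, which is what makes the second conversation feel like a continuation of the first.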

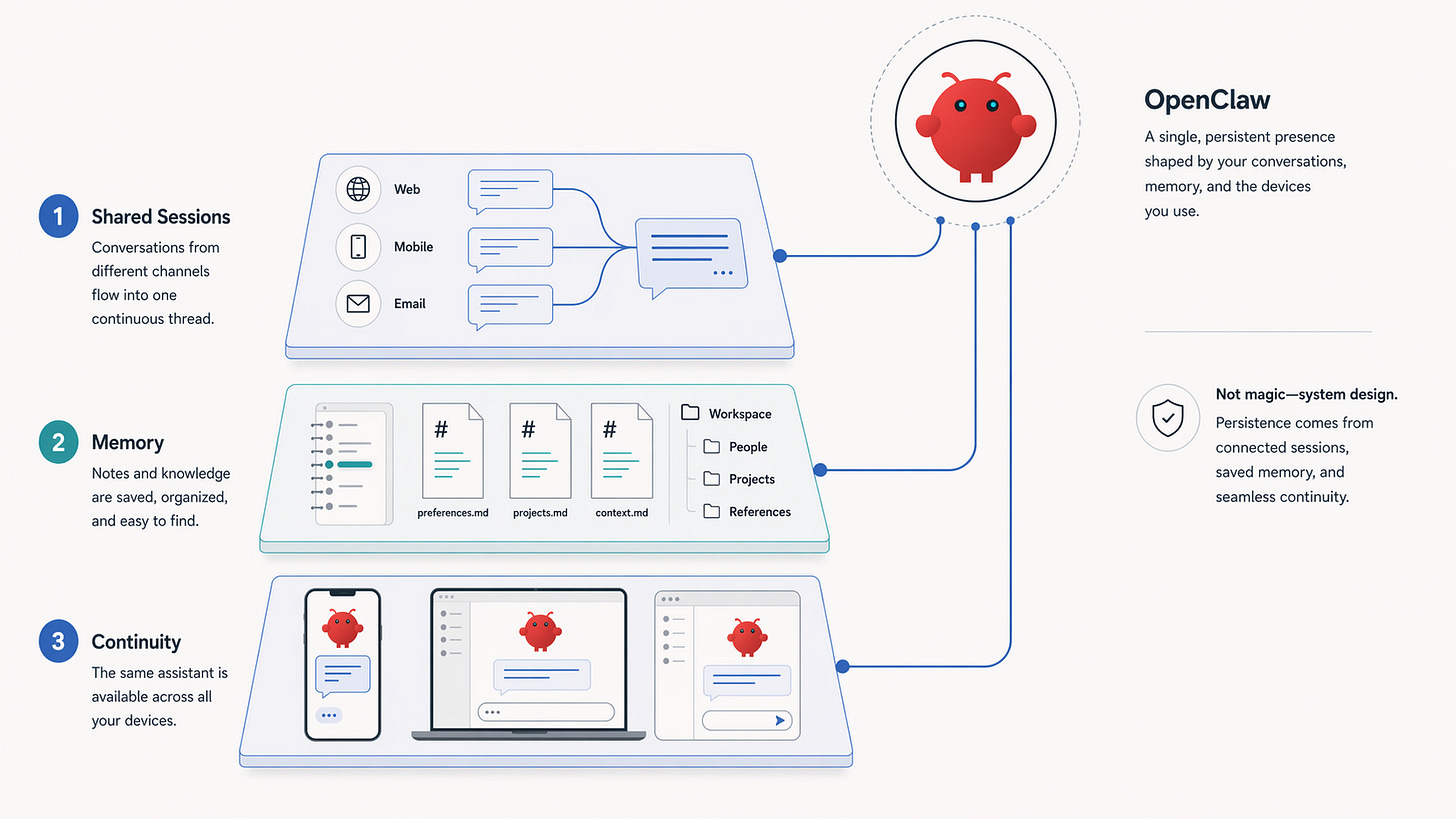

Continuity changes the relationship

The biggest difference is not that OpenClaw can answer questions. Plenty of systems can do that. The more interesting difference is that it can carry context forward in a way that feels less disposable. OpenClaw’s memory system is almost deliberately unglamorous. The docs describe long-term notes in MEMORY.md and daily notes in memory/YYYY-MM-DD.md. The FAQ makes the point even more plainly: memory is just Markdown files in the agent workspace.

That matters because it keeps the claim grounded. This is not some mystical idea of memory where the model has silently absorbed your life. It is continuity built from stored notes, shared sessions, and deliberate re-use of context. In other words, the feeling of persistence comes from state management, not magic. The docs support the mechanics; the stronger claim, that this changes the user’s relationship to the assistant, is interpretation, but it is an interpretation the product design clearly invites.
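To make “memory is just Markdown files” concrete, here is a small Python sketch. The file names `MEMORY.md` and `memory/YYYY-MM-DD.md` come from the docs as described above; the helper functions `append_daily_note` and `load_context`, and the choice of which files to read back on a given turn, are assumptions for illustration rather than OpenClaw’s implementation.

```python
from datetime import date
from pathlib import Path


def append_daily_note(workspace: Path, text: str, today: date) -> Path:
    """Append one bullet to memory/YYYY-MM-DD.md inside the workspace."""
    note_path = workspace / "memory" / f"{today.isoformat()}.md"
    note_path.parent.mkdir(parents=True, exist_ok=True)
    with note_path.open("a", encoding="utf-8") as f:
        f.write(f"- {text}\n")
    return note_path


def load_context(workspace: Path, today: date) -> str:
    """Join long-term notes and today's notes into one string (hypothetical policy)."""
    candidates = (
        workspace / "MEMORY.md",
        workspace / "memory" / f"{today.isoformat()}.md",
    )
    parts = [p.read_text(encoding="utf-8") for p in candidates if p.exists()]
    return "\n".join(parts)
```

Because the state is plain text on disk, a person can open, edit, or delete it with any editor, which is part of why the persistence feels inspectable rather than mysterious.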

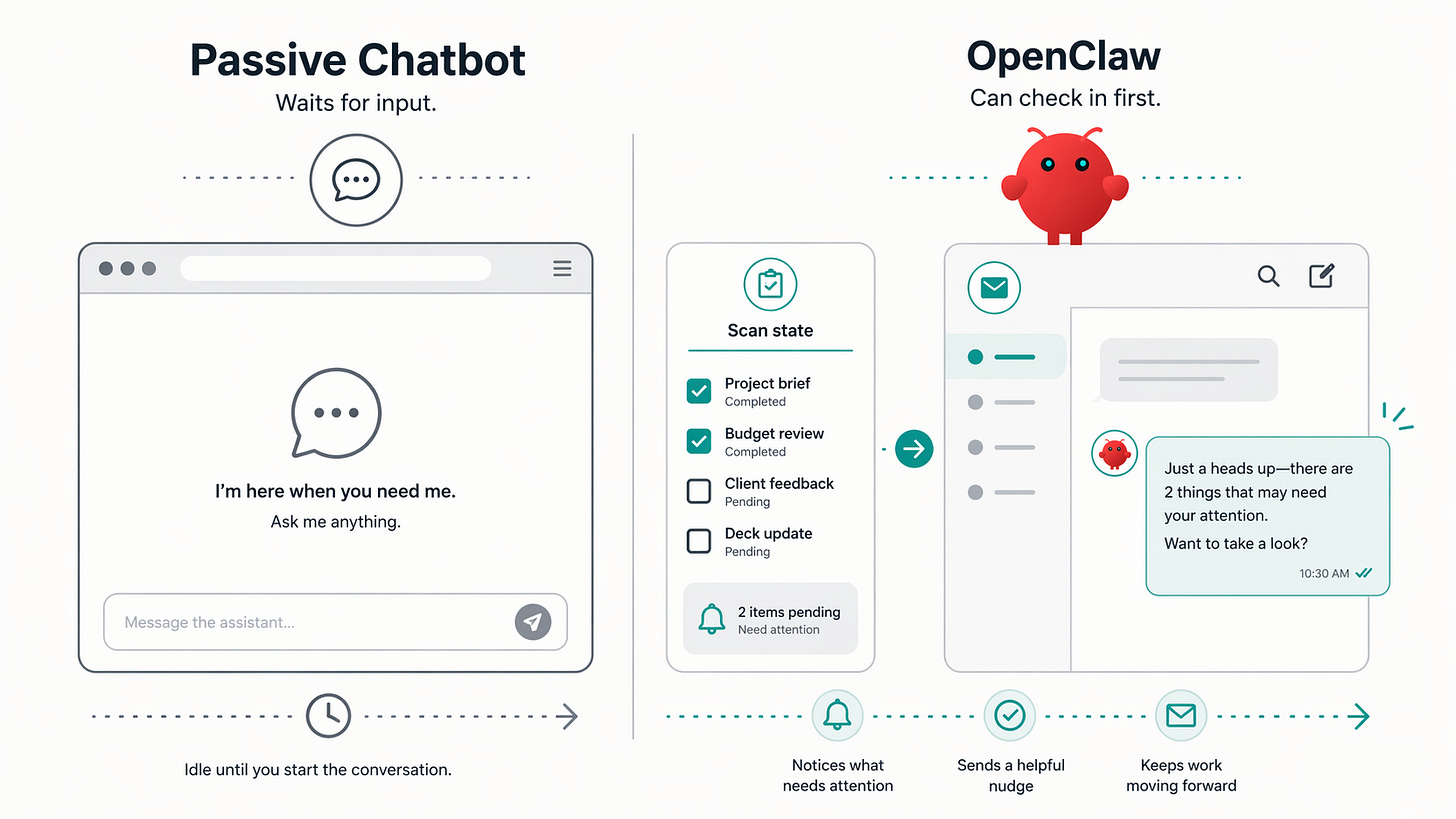

The assistant can check in first

This is where the product starts to feel genuinely different. OpenClaw has a heartbeat system, a scheduled main-session turn that runs on a cadence, reads a small HEARTBEAT.md checklist if you have one, and can optionally route a message to the last contact. The docs say the default interval is 30 minutes in the common case, with configurable active hours and a default behavior that drops empty runs with HEARTBEAT_OK.

That does not sound dramatic until you think about what it means in practice. A chatbot waits. A heartbeat system makes lightweight proactivity possible. The assistant can review what is pending, notice that something needs attention, and sometimes reach out before you remember to ask. That is a bigger emotional shift than most AI writing acknowledges. The difference between passive and proactive is not mystical. It is about cadence, continuity, and permission to speak first.

OpenClaw’s own setup guide treats this power carefully. It recommends disabling heartbeats until you trust the setup. That restraint is important, because proactive assistants can easily become noisy or intrusive if they are badly configured.
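The heartbeat mechanics described in this section can be sketched as a due-check plus a filter for empty runs. The 30-minute interval and the `HEARTBEAT_OK` marker are taken from the description above; the active-hours window, the function names `is_due` and `deliver`, and the exact matching rule are assumptions, not OpenClaw’s real scheduler.

```python
from datetime import datetime, time, timedelta
from typing import Optional

HEARTBEAT_INTERVAL = timedelta(minutes=30)  # default cadence described in the docs
ACTIVE_HOURS = (time(8, 0), time(22, 0))    # hypothetical active window
HEARTBEAT_OK = "HEARTBEAT_OK"               # marker for "nothing to report"


def is_due(last_run: datetime, now: datetime) -> bool:
    """Due once the interval has elapsed and the clock is inside active hours."""
    start, end = ACTIVE_HOURS
    return now - last_run >= HEARTBEAT_INTERVAL and start <= now.time() < end


def deliver(reply: str) -> Optional[str]:
    """Drop empty runs; otherwise return the text that would be sent."""
    text = reply.strip()
    return None if text == HEARTBEAT_OK else text
```

The second function is the one that keeps proactivity from turning into noise: most scheduled turns produce nothing worth saying, and those are silently discarded.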

Your phone stops being just a remote control

The idea gets more interesting when the phone stops being only the place you send messages from. OpenClaw’s nodes are companion devices (macOS, iOS, Android, or headless) that connect to the gateway and expose capabilities like Canvas, camera, notifications, system actions, and device commands. The iOS docs list Canvas, screen snapshot, camera capture, location, talk mode, and voice wake. The Android docs say the app is not publicly released yet, but the source is available and the node can expose chat, history, Canvas, camera, notifications, and other device surfaces.

This is where the post-desktop idea starts to feel earned. The assistant is no longer confined to a webpage. The thread, the phone, the browser dashboard, and the companion device start to feel like parts of one interface. You can still use a browser when you want to. It just stops being the only doorway.

Openness matters because the assistant is not trapped

Another reason OpenClaw stands out is that it is not designed as a closed assistant inside one company’s product boundary. The docs describe it as self-hosted, open source, and model-agnostic. The FAQ frames the value proposition as local-first control over sessions, memory, tools, and channels, with support for hosted and local model options.

For broad readers, the important point is not ideology. It is practical freedom. A cross-channel assistant becomes more useful when it is free to use the best available model, run where you want, and connect to ordinary software surfaces instead of waiting for every workflow to be turned into a polished first-party feature. OpenClaw’s tools docs make that logic explicit: everything the agent does beyond generating text happens through tools, which is how it reads files, runs commands, browses the web, sends messages, and interacts with devices.
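The “everything beyond text happens through tools” idea can be illustrated with a tiny registry and dispatcher. This is a generic sketch of the pattern, assuming a model that emits calls as `{"name": ..., "args": ...}` dictionaries; the tool names, the `tool` decorator, and `dispatch` are hypothetical and do not reflect OpenClaw’s actual tool API.

```python
from typing import Callable, Dict

TOOLS: Dict[str, Callable[..., str]] = {}


def tool(name: str):
    """Register a function under a tool name the model is allowed to call."""
    def register(fn):
        TOOLS[name] = fn
        return fn
    return register


@tool("read_file")
def read_file(path: str) -> str:
    with open(path, encoding="utf-8") as f:
        return f.read()


@tool("send_message")
def send_message(to: str, text: str) -> str:
    # Stand-in for a real channel integration.
    return f"sent to {to}: {text}"


def dispatch(call: dict) -> str:
    """Run a model-requested tool call; unknown tools are refused, not guessed."""
    fn = TOOLS.get(call["name"])
    if fn is None:
        return f"error: unknown tool {call['name']!r}"
    return fn(**call.get("args", {}))
```

The practical point is that the model only proposes actions; the set of registered tools decides what the assistant can actually do.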

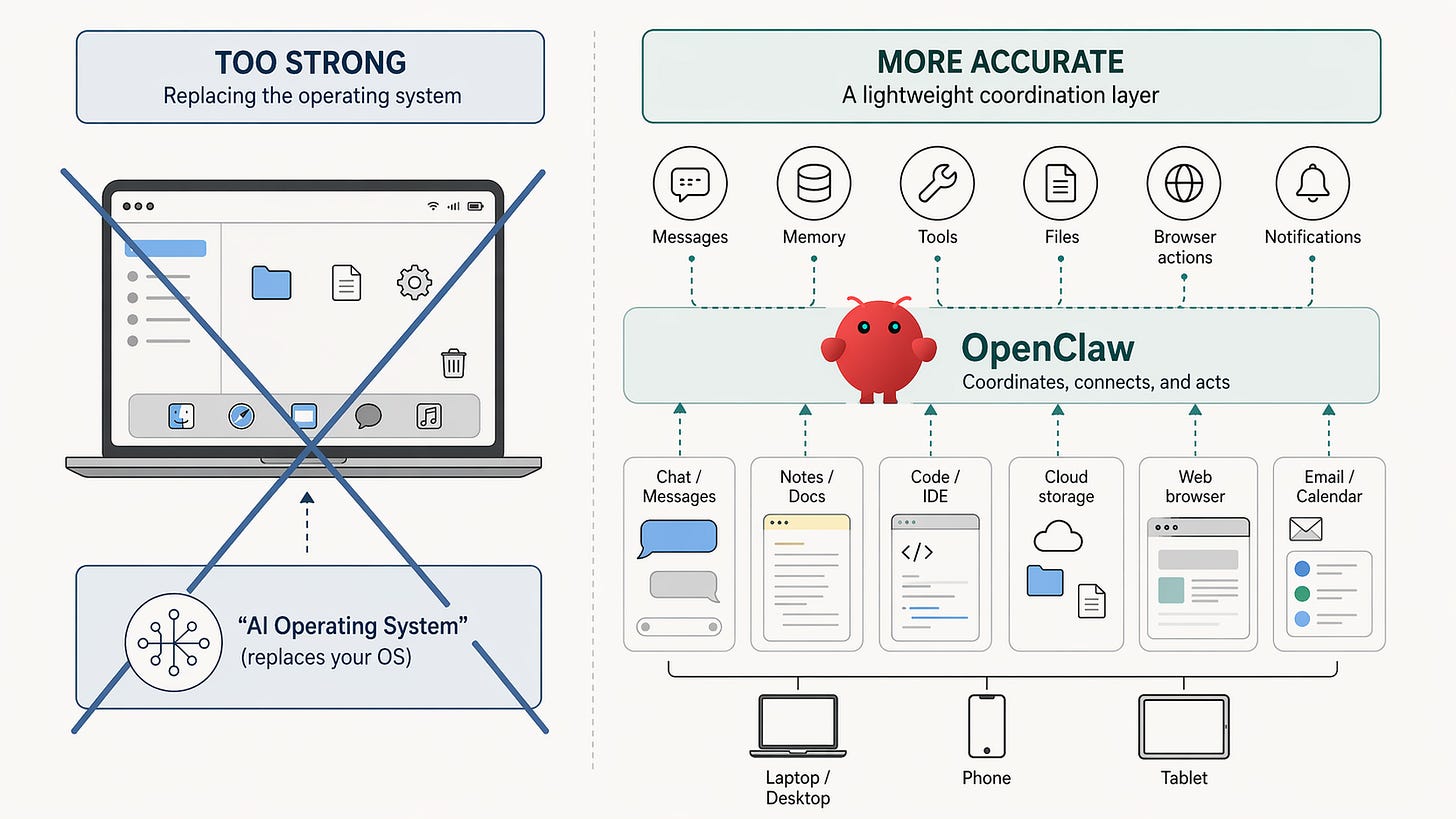

A lightweight operating layer, not a new operating system

This is where the “operating system” language needs discipline. Taken literally, it is too strong. OpenClaw does not replace iOS, Android, macOS, or Windows. It does not own the hardware or the app model. If you say “new operating system” too loudly, it starts to sound like a slogan.

Used more carefully, the metaphor helps. My read is that OpenClaw is starting to look like a lightweight coordination layer above the software you already use. The gateway owns sessions, routing, and channel connections. Memory persists in the workspace. Tools connect the assistant to files, browsers, messages, and devices. Nodes extend that behavior onto phones and other machines. Put together, that is not a new operating system in the literal sense. It is a thinner, more personal layer that makes software feel reachable through conversation instead of only through menus and app windows.

That is the strongest version of the claim, and it is strong enough.

The limits make the signal more credible

The limits are not side notes. They are part of what makes the whole thing believable. OpenClaw’s personal-assistant guide tells users to start conservative, set allowFrom, use a dedicated WhatsApp number, and disable heartbeats until they trust the setup. The security docs are blunt that there is no perfectly secure setup, and that if multiple untrusted users can message one tool-enabled agent, they are effectively sharing the same delegated authority for that agent.
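The “start conservative” advice boils down to an allowlist check before any message reaches a tool-enabled agent. The sketch below borrows only the setting name `allowFrom` from the guide; the config shape, the number normalization, and the deny-by-default behavior for an empty list are assumptions for illustration and may not match how OpenClaw interprets the setting.

```python
from typing import Iterable


def normalize(number: str) -> str:
    """Strip spaces and dashes so '+1 555-0100' matches '+15550100'."""
    return "".join(ch for ch in number if ch.isdigit() or ch == "+")


def is_allowed(sender: str, allow_from: Iterable[str]) -> bool:
    """Only senders on the allowlist may reach the agent (empty list denies all here)."""
    allowed = {normalize(n) for n in allow_from}
    return normalize(sender) in allowed


# Hypothetical config fragment using the setting name from the guide.
config = {"allowFrom": ["+1 555-0100"]}
```

Anyone who passes this check is effectively borrowing the agent’s delegated authority, which is why the list should stay short.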

The FAQ adds another useful correction: OpenClaw’s state can live locally on the gateway host, but external services still see what you send them. Model providers still see prompts sent to their APIs, and chat platforms still store the message traffic they handle. Self-hosting changes the control model, but it does not make the rest of the stack disappear.

It is also still early. The docs say OpenClaw is for developers and power users. The iOS app is an internal preview. The Android app is not publicly released. That matters, because this piece does not need to pretend the consumer future has already arrived. The more accurate claim is also the more interesting one: OpenClaw is an unusually clear signal of where personal AI may be heading. The chat box is not going away. But it may stop being the place where AI lives.

Sources and references

OpenClaw Publication on Substack

You can follow OpenClaw on Twitter/X. You can see Peter Steinberger on Substack here.

Short bio

Vinoth Govindarajan writes about AI systems, infrastructure, and the changing interface between software and everyday work. He has built large-scale data platforms, contributed to open-source projects, and is especially interested in how agent systems move from demos into usable products.

Vinoth is the co-author of Engineering Lakehouses with Open Table Formats and writes The Agent Stack, a systems-first publication on production AI agents and data infrastructure. His work focuses on control planes, state, memory, tool boundaries, reliability, and the architecture patterns that make agent systems more useful, predictable, and trustworthy in practice.

Author’s Work

Visit The Agent Stack Archives here.

Further reading on OpenClaw: Editor’s links

OpenClaw Architecture, Explained

OpenClaw Use Cases That’ll Make You Rethink What AI Agents Can Do

How to Use OpenClaw as an Engineering Leader

How to Make Your OpenClaw Agent Useful and Secure

How to Harden OpenClaw Security: Complete 3-Tier Implementation Guide

Thanks for reading.

It is also worth watching Codex, which is not OpenAI’s version of OpenClaw in a literal sense but is OpenAI’s developer-focused expression of the same deeper shift: AI is moving from a destination chat box into a persistent, tool-using, workflow-native coordination layer.

Codex is increasingly moving toward this logic, but in a different domain. OpenClaw points toward the future of a general-purpose personal assistant embedded in everyday communication channels and device routines. Codex points toward the same structural transition inside software engineering. It is becoming OpenAI’s vertical, developer-focused version of the OpenClaw idea: not a chatbot, but an agentic coordination layer embedded into existing workflows.

The similarity is not that both are “chat products.” The similarity is that both are pushing AI out of the isolated chat box and into something closer to a task-control layer, a persistent context layer, a tool-execution layer, and a cross-interface entry layer. OpenClaw does this across messaging apps, phones, devices, and everyday administrative routines. Codex does it across codebases, Git, terminals, tests, pull requests, cloud environments, and local development workflows.

This is why Codex should no longer be understood simply as intelligent autocomplete inside an IDE, or as a coding Q&A window inside ChatGPT. It is starting to become an engineering task agent layer organized around the actual infrastructure of software work: repositories, branches, diffs, test suites, terminals, review flows, PRs, cloud sandboxes, and local environments. Codex is moving AI from “answering coding questions” toward managing software engineering task flows. That is the same product philosophy behind OpenClaw’s move from “answering in chat” toward “managing personal life, devices, and workflow tasks.”

Of course, Codex remains much more vertical for now. Its current center of gravity is clearly software engineering: codebases, IDEs, GitHub, terminals, PRs, testing, diff review, and cloud sandboxes. But I suspect this may be only the first mature expression of a broader product pattern. Software engineering is an unusually suitable starting point because tasks are structured, environments are reproducible, outputs are inspectable, and results can often be validated through tests, diffs, and human review.

That is why Codex may ultimately matter beyond coding. It may be the showroom for OpenAI’s larger agent strategy. The same architecture does not apply only to code. It can also apply to legal documents, financial analysis, sales operations, customer support, research assistance, internal knowledge management, procurement, and supply-chain coordination. The deeper shift is not from one coding assistant to another. The deeper shift is from conversational AI as an answer engine to agentic AI as a workflow-native execution layer.